LightGBMModel

Overview

The ads.model.framework.lightgbm_model.LightGBMModel class in ADS is designed to allow you to rapidly get a LightGBM model into production. The .prepare() method creates the model artifacts that are needed to deploy a functioning model without you having to configure it or write code. However, you can customize the required score.py file.

The .verify() method simulates a model deployment by calling the load_model() and predict() methods in the score.py file. With the .verify() method, you can debug your score.py file without deploying any models. The .save() method saves the model artifact to the model catalog. The .deploy() method deploys the model to a REST endpoint.
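To make the verify step concrete, here is a minimal sketch of the two functions that .verify() is described as calling. It is not the score.py that ADS generates: the stub ThresholdModel, its decision rule, and the local_verify helper are hypothetical stand-ins so that the example runs without any dependencies.

```python
"""Illustrative stand-in for a score.py module (not the file ADS generates)."""


class ThresholdModel:
    """Hypothetical stub model: predicts 1 when the first feature is positive."""

    def predict(self, rows):
        return [1 if row[0] > 0 else 0 for row in rows]


def load_model():
    """Return the model object; a real score.py would deserialize it from disk."""
    return ThresholdModel()


def predict(data, model=None):
    """Score the input rows and wrap the result in a {'prediction': ...} dict."""
    if model is None:
        model = load_model()
    return {"prediction": model.predict(data)}


def local_verify(data):
    """Hypothetical helper mimicking a local check: load the model, then predict."""
    model = load_model()
    return predict(data, model=model)


result = local_verify([[0.6, 0.9], [-1.2, 0.3]])
print(result)
```

Because both functions run locally, an error in either one surfaces immediately, which is the debugging benefit the verify step is meant to provide.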

The following steps take your trained LightGBM model and deploy it into production with a few lines of code.

The LightGBMModel module in ADS supports serialization for models generated from both the Training API using lightgbm.train() and the Scikit-Learn API using lightgbm.LGBMClassifier(). Both of these interfaces are defined by LightGBM.

The Training API in LightGBM contains training and cross-validation routines. The Dataset class is the internal data structure that LightGBM uses with the lightgbm.train() method. You can also create LightGBM models using the Scikit-Learn Wrapper interface. The LightGBMModel class handles the differences between the LightGBM Training and Scikit-Learn APIs.

Create LightGBM Model

import lightgbm as lgb
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

seed = 42

X, y = make_classification(n_samples=10000, n_features=15, n_classes=2, flip_y=0.05)
trainx, testx, trainy, testy = train_test_split(X, y, test_size=30, random_state=seed)

model = lgb.LGBMClassifier(
    n_estimators=100, learning_rate=0.01, random_state=42
)
model.fit(
    trainx,
    trainy,
)

Prepare Model Artifact

import tempfile

from ads.common.model_metadata import UseCaseType
from ads.model.framework.lightgbm_model import LightGBMModel

# Temporary directory that receives the generated artifact files
artifact_dir = tempfile.mkdtemp()
lightgbm_model = LightGBMModel(estimator=model, artifact_dir=artifact_dir)
lightgbm_model.prepare(
    inference_conda_env="generalml_p38_cpu_v1",
    training_conda_env="generalml_p38_cpu_v1",
    X_sample=trainx,
    y_sample=trainy,
    use_case_type=UseCaseType.BINARY_CLASSIFICATION,
)

Instantiate an ads.model.framework.lightgbm_model.LightGBMModel() object with a LightGBM model. Each instance accepts the following parameters:

- artifact_dir: str: Artifact directory to store the files needed for deployment.
- auth: (Dict, optional): Defaults to None. The default authentication is set using the ads.set_auth API. To override the default, use ads.common.auth.api_keys() or ads.common.auth.resource_principal() and create the appropriate authentication signer and the **kwargs required to instantiate the IdentityClient object.
- estimator: (Callable): Trained LightGBM model using the Training API or the Scikit-Learn Wrapper interface.
- properties: (ModelProperties, optional): Defaults to None. The ModelProperties object required to save and deploy a model.

The properties object is an instance of the ModelProperties class and has the following predefined fields:

- bucket_uri: str
- compartment_id: str
- deployment_access_log_id: str
- deployment_bandwidth_mbps: int
- deployment_instance_count: int
- deployment_instance_shape: str
- deployment_log_group_id: str
- deployment_predict_log_id: str
- deployment_memory_in_gbs: Union[float, int]
- deployment_ocpus: Union[float, int]
- inference_conda_env: str
- inference_python_version: str
- overwrite_existing_artifact: bool
- project_id: str
- remove_existing_artifact: bool
- training_conda_env: str
- training_id: str
- training_python_version: str
- training_resource_id: str
- training_script_path: str

By default, properties is populated from environment variables when you don't specify it. For example, in notebook sessions the project ID and compartment ID are preset in the PROJECT_OCID and NB_SESSION_COMPARTMENT_OCID environment variables. The properties object reads these values and uses them in methods such as .save() and .deploy(). Pass in values to override the defaults. When you call a method that accepts properties fields, properties records the values that you pass in. For example, when you pass inference_conda_env into the .prepare() method, properties records the value. To reuse the properties in different places, export them with the .to_yaml() method, then reload them on a different machine with the .from_yaml() method.
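The default-then-override behavior described above can be sketched with a small dataclass. This is an illustration only, not the ModelProperties implementation: PropertiesSketch, ENV_DEFAULTS, and record() are hypothetical names, only three of the fields are modeled, and the environment variables are set by hand to imitate a notebook session.

```python
import os
from dataclasses import dataclass
from typing import Optional

# Field name -> environment variable that supplies its default (names from the text).
ENV_DEFAULTS = {
    "project_id": "PROJECT_OCID",
    "compartment_id": "NB_SESSION_COMPARTMENT_OCID",
}


@dataclass
class PropertiesSketch:
    """Hypothetical, trimmed-down stand-in for ModelProperties."""

    project_id: Optional[str] = None
    compartment_id: Optional[str] = None
    inference_conda_env: Optional[str] = None

    def __post_init__(self):
        # Fill any field left unset from its environment variable, if defined.
        for name, env_name in ENV_DEFAULTS.items():
            if getattr(self, name) is None:
                setattr(self, name, os.environ.get(env_name))

    def record(self, **kwargs):
        """Remember values passed to a method call; None leaves a field unchanged."""
        for key, value in kwargs.items():
            if value is not None:
                setattr(self, key, value)
        return self


# Imitate the variables that a notebook session would preset (placeholder OCIDs).
os.environ["PROJECT_OCID"] = "ocid1.datascienceproject.oc1.xxx.xxxxx"
os.environ["NB_SESSION_COMPARTMENT_OCID"] = "ocid1.compartment.oc1.xxx.xxxxx"

# An explicit value wins over the environment default.
props = PropertiesSketch(compartment_id="ocid1.compartment.oc1.xxx.override")
# A later call, such as prepare(), contributes further values.
props.record(inference_conda_env="generalml_p38_cpu_v1")
print(props)
```

Here project_id comes from the environment, compartment_id keeps the explicitly passed value, and inference_conda_env is recorded from the later call.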

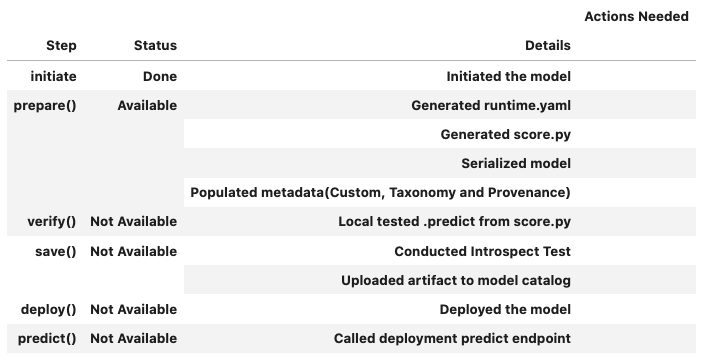

Summary Status

You can call the .summary_status() method after a model serialization instance such as GenericModel, SklearnModel, TensorFlowModel, or PyTorchModel is created. The .summary_status() method returns a Pandas dataframe that guides you through the entire workflow. It shows which methods are available to call and which ones aren’t. It also outlines what each method does and lists any extra actions that are required.

The image shows an example summary status table created after a user initiates a model instance. The Step column lists the initiate step along with the prepare(), verify(), save(), deploy(), and predict() methods. The Status column shows Done for the initiate step, and the Details column explains what that step did, such as generating a score.py file. The Status column also shows which method is available next. After the initiate step, the next step is to call the prepare() method.

Register Model

>>> # Register the model
>>> model_id = lightgbm_model.save()
Start loading model.joblib from model directory /tmp/tmphl0uhtbb ...
Model is successfully loaded.
['runtime.yaml', 'model.joblib', 'score.py', 'input_schema.json']
'ocid1.datasciencemodel.oc1.xxx.xxxxx'

Deploy and Generate Endpoint

# Deploy and create an endpoint for the LightGBM model
lightgbm_model.deploy(
    display_name="LightGBM Model For Classification",
    deployment_log_group_id="ocid1.loggroup.oc1.xxx.xxxxx",
    deployment_access_log_id="ocid1.log.oc1.xxx.xxxxx",
    deployment_predict_log_id="ocid1.log.oc1.xxx.xxxxx",
    # Shape config details mandatory for flexible shapes:
    # deployment_instance_shape="VM.Standard.E4.Flex",
    # deployment_ocpus=<number>,
    # deployment_memory_in_gbs=<number>,
)

print(f"Endpoint: {lightgbm_model.model_deployment.url}")
# Output: "Endpoint: https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx"

Run Prediction against Endpoint

# Generate prediction by invoking the deployed endpoint
lightgbm_model.predict(testx)['prediction']

[1,0,...,1]

Run Prediction with oci raw-request command

You can invoke model deployment endpoints with the OCI CLI. The following examples invoke a model deployment from the CLI with different payload types: json, numpy.ndarray, pandas.core.frame.DataFrame, or dict.

json payload example

>>> # Prepare data sample for prediction
>>> data = testx[[11]]
>>> data
array([[ 0.59990614, 0.95516275, -1.22366985, -0.89270887, -1.14868768,
        -0.3506047 , 0.28529227, 2.00085413, 0.31212668, 0.39411511,
        0.87301082, -0.01743923, -1.15089633, 1.03461823, 1.50228029]])

Use the printed data and the endpoint to invoke a prediction with the raw-request command in a terminal:

export uri=https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx/predict
export data='{"data": [[ 0.59990614, 0.95516275, ... , 1.50228029]]}'
oci raw-request \
    --http-method POST \
    --target-uri $uri \
    --request-body "$data"
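The JSON request body used above can also be built programmatically rather than copied by hand. The following is a minimal sketch that uses only the standard library and the row printed in this example; printing an export line with shlex.quote is an illustrative choice, not something the OCI CLI requires.

```python
import json
import shlex

# The single row printed in the json payload example above (15 feature values).
row = [
    0.59990614, 0.95516275, -1.22366985, -0.89270887, -1.14868768,
    -0.3506047, 0.28529227, 2.00085413, 0.31212668, 0.39411511,
    0.87301082, -0.01743923, -1.15089633, 1.03461823, 1.50228029,
]

# The payload wraps a two-dimensional structure (a list of rows) under "data".
body = json.dumps({"data": [row]})

# shlex.quote makes the string safe to paste after `export data=` in a shell.
export_line = f"export data={shlex.quote(body)}"
print(export_line)
```

When the sample is a NumPy array, calling its tolist() method first yields the nested list that json.dumps can serialize.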

numpy.ndarray payload example

>>> # Prepare data sample for prediction
>>> from io import BytesIO
>>> import base64
>>> import numpy as np
>>> data = testx[[10]]
>>> np_bytes = BytesIO()
>>> np.save(np_bytes, data, allow_pickle=True)
>>> data = base64.b64encode(np_bytes.getvalue()).decode("utf-8")
>>> print(data)
k05VTVBZAQB2AHsnZGVzY......D1cJ+D8=

Use the printed base64 data and the endpoint to invoke a prediction with the raw-request command in a terminal:

export uri=https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx/predict
export data='{"data":"k05VTVBZAQB2AHsnZGVzY......4UdN0L8=", "data_type": "numpy.ndarray"}'
oci raw-request \
    --http-method POST \
    --target-uri $uri \
    --request-body "$data"
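To show what the base64 string carries, the sketch below round-trips an array through the same np.save and base64 steps and then decodes it again. The decode side is an assumption about how a receiver could restore the array, not a description of the deployed scoring code, and the sample values are placeholders rather than the tutorial's test row.

```python
import base64
import json
from io import BytesIO

import numpy as np


def encode_ndarray(array):
    """Serialize an array with np.save and return it as a base64 string."""
    buffer = BytesIO()
    np.save(buffer, array, allow_pickle=True)
    return base64.b64encode(buffer.getvalue()).decode("utf-8")


def decode_ndarray(encoded):
    """Reverse encode_ndarray: base64-decode, then load the .npy bytes."""
    return np.load(BytesIO(base64.b64decode(encoded)))


sample = np.array([[0.41, 0.68, -0.25]])  # placeholder values
encoded = encode_ndarray(sample)

# Request body in the same shape as the export line above.
body = json.dumps({"data": encoded, "data_type": "numpy.ndarray"})

restored = decode_ndarray(json.loads(body)["data"])
print(np.array_equal(sample, restored))
```

The encoded string begins with k05VTVBZ because every .npy file starts with the magic bytes \x93NUMPY, which is why the printed output above has the same prefix.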

pandas.core.frame.DataFrame payload example

>>> # Prepare data sample for prediction
>>> import json
>>> import pandas as pd
>>> df = pd.DataFrame(testx[[10]])
>>> print(json.dumps(df.to_json()))
"{\"0\":{\"0\":0.4133005141},\"1\":{\"0\":0.676589266},...,\"14\":{\"0\":-0.2547168443}}"

Use the printed DataFrame data and the endpoint to invoke a prediction with the raw-request command in a terminal:

export uri=https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx/predict
export data='{"data":"{\"0\":{\"0\":0.4133005141},...,\"14\":{\"0\":-0.2547168443}}","data_type":"pandas.core.frame.DataFrame"}'
oci raw-request \
    --http-method POST \
    --target-uri $uri \
    --request-body "$data"
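The escaped quotes in the DataFrame payload come from encoding JSON twice: the frame is first turned into a JSON string, and that string is then embedded as the value of "data". The following sketch reproduces the two steps with the standard library only; the two-column mapping is a shortened stand-in for the 15-column frame, using the first two values printed above.

```python
import json

# Column-oriented mapping in the shape used above:
# {column label: {row label: value}}.
columns = {"0": {"0": 0.4133005141}, "1": {"0": 0.676589266}}

# First encoding: the frame itself becomes a JSON string.
frame_json = json.dumps(columns)

# Second encoding: that string is embedded as the value of "data",
# so its inner quotes are escaped with backslashes.
body = json.dumps(
    {"data": frame_json, "data_type": "pandas.core.frame.DataFrame"}
)
print(body)

# Decoding reverses the two steps.
outer = json.loads(body)
inner = json.loads(outer["data"])
print(inner == columns)
```

A dict payload, by contrast, nests the mapping directly under "data", so it is encoded once and contains no escaped quotes.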

dict payload example

>>> # Prepare data sample for prediction
>>> import json
>>> import pandas as pd
>>> df = pd.DataFrame(testx[[11]])
>>> print(json.dumps(df.to_dict()))
{"0": {"0": 0.5999061426438217}, "1": {"0": 0.9551627492226553}, ...,"14": {"0": 1.5022802918908846}}

Use the printed dict data and the endpoint to invoke a prediction with the raw-request command in a terminal:

export uri=https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx/predict
export data='{"data": {"0": {"0": 0.5999061426438217}, ...,"14": {"0": 1.5022802918908846}}}'
oci raw-request \
    --http-method POST \
    --target-uri $uri \
    --request-body "$data"

Expected output of raw-request command

{
  "data": {
    "prediction": [
      1
    ]
  },
  "headers": {
    "Connection": "keep-alive",
    "Content-Length": "18",
    "Content-Type": "application/json",
    "Date": "Wed, 07 Dec 2022 18:31:05 GMT",
    "X-Content-Type-Options": "nosniff",
    "opc-request-id": "B67EED723ADD43D7BBA1AD5AFCCBD0C6/03F218FF833BC8A2D6A5BDE4AB8B7C12/6ACBC4E5C5127AC80DA590568D628B60",
    "server": "uvicorn"
  },
  "status": "200 OK"
}
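When the command is scripted, the prediction can be pulled out of this output with a few lines of Python. This is a minimal sketch: extract_prediction is a hypothetical helper, the response text is a trimmed copy of the output above, and treating any status that does not start with 200 as a failure is an assumption.

```python
import json

# Trimmed copy of the response shown above; only the fields used here are kept.
raw_output = """
{
  "data": {"prediction": [1]},
  "headers": {"Content-Type": "application/json"},
  "status": "200 OK"
}
"""


def extract_prediction(output):
    """Parse raw-request output and return the list under data.prediction."""
    response = json.loads(output)
    if not response["status"].startswith("200"):
        raise RuntimeError(f"Request failed with status {response['status']}")
    return response["data"]["prediction"]


prediction = extract_prediction(raw_output)
print(prediction)
```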

Example

from ads.model.framework.lightgbm_model import LightGBMModel
from ads.common.model_metadata import UseCaseType

import lightgbm as lgb
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
import tempfile

seed = 42

# Create a classification dataset
X, y = make_classification(n_samples=10000, n_features=15, n_classes=2, flip_y=0.05)
trainx, testx, trainy, testy = train_test_split(X, y, test_size=30, random_state=seed)

# Train LGBM model
model = lgb.LGBMClassifier(n_estimators=100, learning_rate=0.01, random_state=42)
model.fit(
    trainx,
    trainy,
)

# Prepare Model Artifact for LightGBM model
artifact_dir = tempfile.mkdtemp()
lightgbm_model = LightGBMModel(estimator=model, artifact_dir=artifact_dir)
lightgbm_model.prepare(
    inference_conda_env="generalml_p38_cpu_v1",
    training_conda_env="generalml_p38_cpu_v1",
    X_sample=trainx,
    y_sample=trainy,
    force_overwrite=True,
    use_case_type=UseCaseType.BINARY_CLASSIFICATION,
)

# Check if the artifacts are generated correctly.
# The verify method invokes the ``predict`` function defined inside ``score.py`` in the artifact_dir
lightgbm_model.verify(testx[:10])["prediction"]

# Register the model
model_id = lightgbm_model.save(display_name="LightGBM Model")

# Deploy and create an endpoint for the LightGBM model
lightgbm_model.deploy(
    display_name="LightGBM Model For Classification",
    deployment_log_group_id="ocid1.loggroup.oc1.xxx.xxxxx",
    deployment_access_log_id="ocid1.log.oc1.xxx.xxxxx",
    deployment_predict_log_id="ocid1.log.oc1.xxx.xxxxx",
)

print(f"Endpoint: {lightgbm_model.model_deployment.url}")

# Generate prediction by invoking the deployed endpoint
lightgbm_model.predict(testx)["prediction"]

# To delete the deployed endpoint uncomment the line below
# lightgbm_model.delete_deployment(wait_for_completion=True)