PyTorchModel

Overview

The ads.model.framework.pytorch_model.PyTorchModel class in ADS is designed to help you rapidly get a PyTorch model into production. The .prepare() method creates the model artifacts needed to deploy a functioning model without requiring you to configure it or write code. You can, however, customize the generated score.py file.

The .verify() method simulates a model deployment by calling the load_model() and predict() methods in the score.py file. With the .verify() method, you can debug your score.py file without deploying any models. The .save() method saves the model artifact to the model catalog. The .deploy() method deploys the model to a REST endpoint.

The following steps take your trained PyTorch model and deploy it into production with a few lines of code.

Create a PyTorch Model

Load a ResNet18 model and put it into evaluation mode.

```python
import torch
import torchvision

model = torchvision.models.resnet18(pretrained=True)
model.eval()
```

Prepare Model Artifact

Save as TorchScript

Added in version 2.6.9.

Set use_torch_script to True to serialize the model as a TorchScript program. You can then load the model and run inference without defining the model class.

```python
from ads.common.model_metadata import UseCaseType
from ads.model.framework.pytorch_model import PyTorchModel

# Prepare the model
artifact_dir = "pytorch_model_artifact"
pytorch_model = PyTorchModel(model, artifact_dir=artifact_dir)
pytorch_model.prepare(
    inference_conda_env="pytorch110_p38_cpu_v1",
    training_conda_env="pytorch110_p38_cpu_v1",
    use_case_type=UseCaseType.IMAGE_CLASSIFICATION,
    force_overwrite=True,
    use_torch_script=True,
)

# You don't need to modify the generated score.py. The model can be loaded without defining the model class.
# More info here - https://pytorch.org/tutorials/beginner/saving_loading_models.html#export-load-model-in-torchscript-format
```
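The following sketch shows why TorchScript removes the need for the model class. It uses plain PyTorch rather than ADS, and it may not match what ADS does internally. The example defines a small hypothetical module, TinyNet, traces it with torch.jit.trace, and saves the program to an in-memory buffer. It then loads the program back with torch.jit.load. The loaded object carries its own compiled code, so calling it does not go through the TinyNet class.

```python
import io

import torch


class TinyNet(torch.nn.Module):
    """Hypothetical stand-in for a real model such as ResNet18."""

    def __init__(self):
        super().__init__()
        self.fc = torch.nn.Linear(4, 2)

    def forward(self, x):
        return torch.relu(self.fc(x))


model = TinyNet().eval()
example_input = torch.randn(1, 4)

# Trace the model into a TorchScript program and serialize it to a buffer.
scripted = torch.jit.trace(model, example_input)
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)

# Load the serialized program back; inference runs through the TorchScript code.
buffer.seek(0)
loaded = torch.jit.load(buffer)

with torch.no_grad():
    original_out = model(example_input)
    loaded_out = loaded(example_input)

print(torch.allclose(original_out, loaded_out))
```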

Save state_dict

```python
from ads.common.model_metadata import UseCaseType
from ads.model.framework.pytorch_model import PyTorchModel

# Prepare the model
artifact_dir = "pytorch_model_artifact"
pytorch_model = PyTorchModel(model, artifact_dir=artifact_dir)
pytorch_model.prepare(
    inference_conda_env="pytorch110_p38_cpu_v1",
    training_conda_env="pytorch110_p38_cpu_v1",
    use_case_type=UseCaseType.IMAGE_CLASSIFICATION,
    force_overwrite=True,
)

# The generated score.py requires you to create an instance of the model class before the weights are loaded.
# More info here - https://pytorch.org/tutorials/beginner/saving_loading_models.html#save-load-state-dict-recommended
```
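A state_dict, by contrast, stores only the parameter tensors and not the architecture. The sketch below uses plain PyTorch and the same hypothetical TinyNet class. It shows why load_model() in score.py must construct a model instance before it calls load_state_dict().

```python
import io

import torch


class TinyNet(torch.nn.Module):
    """Hypothetical model class; it must be available wherever the weights are loaded."""

    def __init__(self):
        super().__init__()
        self.fc = torch.nn.Linear(4, 2)

    def forward(self, x):
        return self.fc(x)


trained = TinyNet().eval()

# Save only the parameters (a dict of tensors), not the model architecture.
buffer = io.BytesIO()
torch.save(trained.state_dict(), buffer)
buffer.seek(0)
state_dict = torch.load(buffer)

# The architecture comes from code: build an instance first, then load the weights.
restored = TinyNet()
restored.load_state_dict(state_dict)
restored.eval()

x = torch.randn(3, 4)
with torch.no_grad():
    print(torch.equal(trained(x), restored(x)))
```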

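Open pytorch_model_artifact/score.py and edit the code to instantiate the model class. The edits are the two lines near the top of the file that import torchvision and create the_model = torchvision.models.resnet18():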

```python
import os
import sys
from functools import lru_cache
import torch
import json
from typing import Dict, List
import numpy as np
import pandas as pd
from io import BytesIO
import base64
import logging

import torchvision
the_model = torchvision.models.resnet18()

model_name = 'model.pt'


"""
Inference script. This script is used for prediction by scoring server when schema is known.
"""


@lru_cache(maxsize=10)
def load_model(model_file_name=model_name):
    """
    Loads model from the serialized format

    Returns
    -------
    model: a model instance on which predict API can be invoked
    """
    model_dir = os.path.dirname(os.path.realpath(__file__))
    if model_dir not in sys.path:
        sys.path.insert(0, model_dir)
    contents = os.listdir(model_dir)
    if model_file_name in contents:
        print(f'Start loading {model_file_name} from model directory {model_dir} ...')
        model_state_dict = torch.load(os.path.join(model_dir, model_file_name))
        print(f"loading {model_file_name} is complete.")
    else:
        raise Exception(f'{model_file_name} is not found in model directory {model_dir}')

    # User would need to provide reference to the TheModelClass and
    # construct the the_model instance first before loading the parameters.
    # the_model = TheModelClass(*args, **kwargs)
    try:
        the_model.load_state_dict(model_state_dict)
    except NameError as e:
        raise NotImplementedError("TheModelClass instance must be constructed before loading the parameters. Please modify the load_model() function in score.py." )
    except Exception as e:
        raise e

    the_model.eval()
    print("Model is successfully loaded.")

    return the_model
```
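load_model() is decorated with @lru_cache(maxsize=10). As a result, repeated calls with the same arguments return the cached model instead of reading the file again. The stdlib-only sketch below uses a hypothetical load_model_stub() that counts how often its body actually runs.

```python
from functools import lru_cache

load_count = 0


@lru_cache(maxsize=10)
def load_model_stub(model_file_name="model.pt"):
    """Hypothetical stand-in for score.py's load_model(); returns a fake model."""
    global load_count
    load_count += 1  # Incremented only when the function body actually executes.
    return {"name": model_file_name}


first = load_model_stub()
second = load_model_stub()           # Cache hit: the body does not run again.
third = load_model_stub("other.pt")  # New argument: a cache miss, so the body runs.

print(load_count)
print(first is second)
```

Here load_count ends at 2, and first and second are the same object. Because the model stays cached in the serving process, a changed model file on disk is not picked up until the cache is cleared (for example with load_model.cache_clear()) or the process restarts.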

Instantiate a PyTorchModel() object with a PyTorch model. Each instance accepts the following parameters:

- artifact_dir: str. Artifact directory to store the files needed for deployment.
- auth: (Dict, optional). Defaults to None. The default authentication is set using the ads.set_auth API. To override the default, use ads.common.auth.api_keys() or ads.common.auth.resource_principal() and create the appropriate authentication signer and the **kwargs required to instantiate the IdentityClient object.
- estimator: Callable. Any model object generated by the PyTorch framework.
- properties: (ModelProperties, optional). Defaults to None. The ModelProperties object required to save and deploy the model.

The properties argument is an instance of the ModelProperties class and has the following predefined fields:

- bucket_uri: str
- compartment_id: str
- deployment_access_log_id: str
- deployment_bandwidth_mbps: int
- deployment_instance_count: int
- deployment_instance_shape: str
- deployment_log_group_id: str
- deployment_predict_log_id: str
- deployment_memory_in_gbs: Union[float, int]
- deployment_ocpus: Union[float, int]
- inference_conda_env: str
- inference_python_version: str
- overwrite_existing_artifact: bool
- project_id: str
- remove_existing_artifact: bool
- training_conda_env: str
- training_id: str
- training_python_version: str
- training_resource_id: str
- training_script_path: str

When properties is not specified, it is populated from environment variables by default. For example, notebook sessions preset the project OCID in PROJECT_OCID and the compartment OCID in NB_SESSION_COMPARTMENT_OCID. properties reads these values and uses them in methods such as .save() and .deploy(). Pass in values to override these defaults. When you pass a value to a method that accepts properties fields, properties records that value. For example, when you pass inference_conda_env into the .prepare() method, properties records it. To reuse the properties elsewhere, export them with the .to_yaml() method and reload them on a different machine with the .from_yaml() method.
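The precedence described above is that explicitly passed values override values read from the environment. The sketch below shows that rule in plain Python. The helper resolve_properties() is hypothetical and is not part of the ADS API. Only the variable names PROJECT_OCID and NB_SESSION_COMPARTMENT_OCID come from the text; the OCID values are placeholders.

```python
import os
from typing import Dict, Optional

# Environment variables that notebook sessions preset (names from the text above).
ENV_DEFAULTS = {
    "project_id": "PROJECT_OCID",
    "compartment_id": "NB_SESSION_COMPARTMENT_OCID",
}


def resolve_properties(overrides: Optional[Dict[str, str]] = None) -> Dict[str, Optional[str]]:
    """Hypothetical sketch: read defaults from the environment, then apply explicit overrides."""
    resolved = {field: os.environ.get(var) for field, var in ENV_DEFAULTS.items()}
    # Explicitly passed values (for example, arguments to .save()) take precedence.
    resolved.update({k: v for k, v in (overrides or {}).items() if v is not None})
    return resolved


# Placeholder values standing in for what a notebook session would preset.
os.environ["PROJECT_OCID"] = "ocid1.datascienceproject.oc1.xxx.env"
os.environ["NB_SESSION_COMPARTMENT_OCID"] = "ocid1.compartment.oc1.xxx.env"

props = resolve_properties({"compartment_id": "ocid1.compartment.oc1.xxx.explicit"})
print(props["project_id"])      # Taken from the environment.
print(props["compartment_id"])  # The explicit value wins.
```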

Verify Changes to score.py

Download and preprocess an image for prediction:

```python
# Download an image
import urllib.request

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")
urllib.request.urlretrieve(url, filename)

# Preprocess the image and convert it to a torch.Tensor
from PIL import Image
from torchvision import transforms

input_image = Image.open(filename)
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model
```
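transforms.Normalize computes (x - mean) / std separately for each channel, and unsqueeze(0) adds a leading batch dimension. The NumPy-only sketch below reproduces those two steps. It assumes a channel-first (3, H, W) array whose values are already scaled to [0, 1], as ToTensor() produces. It is an illustration, not the torchvision implementation.

```python
import numpy as np

# ImageNet channel statistics used in the preprocessing pipeline above.
MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def normalize_chw(image: np.ndarray) -> np.ndarray:
    """Normalize a (3, H, W) float image per channel: (x - mean) / std."""
    # Reshape the statistics to (3, 1, 1) so they broadcast over H and W.
    return (image - MEAN[:, None, None]) / STD[:, None, None]


# A uniform mid-gray 224x224 image with values in [0, 1], as ToTensor() would produce.
image = np.full((3, 224, 224), 0.5, dtype=np.float32)
normalized = normalize_chw(image)
batch = normalized[np.newaxis, ...]  # Same effect as tensor.unsqueeze(0)

print(batch.shape)
print(normalized[:, 0, 0])  # One normalized value per channel
```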

Verify the score.py changes by running inference locally:

```python
>>> prediction = pytorch_model.verify(input_batch)["prediction"]
>>> import numpy as np
>>> np.argmax(prediction)
258
```
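The prediction holds one raw score (logit) per ImageNet class, and np.argmax() returns the index of the highest score. In the standard ImageNet label ordering, index 258 is the Samoyed class. The NumPy-only sketch below turns logits into probabilities with a softmax and lists the top candidates. The logits are made-up values for illustration.

```python
import numpy as np


def top_k(logits, k=3):
    """Return (index, probability) pairs for the k highest-scoring classes."""
    scores = np.asarray(logits, dtype=np.float64).reshape(-1)
    # Subtract the max before exponentiating for numerical stability.
    exp = np.exp(scores - scores.max())
    probs = exp / exp.sum()
    order = np.argsort(probs)[::-1][:k]
    return [(int(i), float(probs[i])) for i in order]


# Illustrative logits for a 5-class problem (not real model output).
logits = [[0.1, 2.0, -1.0, 3.5, 0.0]]
for index, prob in top_k(logits, k=2):
    print(index, round(prob, 3))
```

The sketch flattens the input, so it assumes a batch of one image, like input_batch above.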

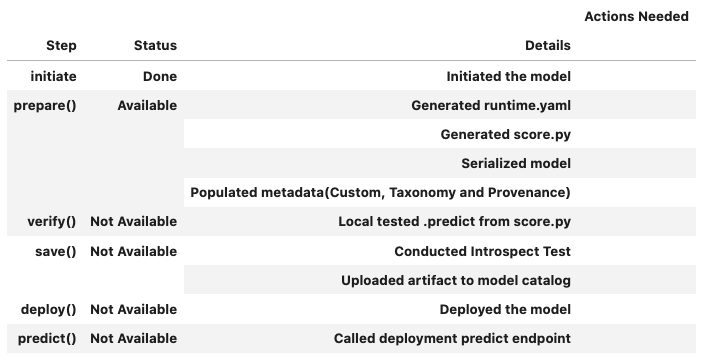

Summary Status¶

You can call the .summary_status() method after a model serialization instance such as GenericModel, SklearnModel, TensorFlowModel, or PyTorchModel is created. The .summary_status() method returns a Pandas dataframe that guides you through the entire workflow. It shows which methods are available to call and which ones aren’t. Plus it outlines what each method does. If extra actions are required, it also shows those actions.

The following image displays an example summary status table created after a user initiates a model instance. The table’s Step column displays a Status of Done for the initiate step. And the Details column explains what the initiate step did such as generating a score.py file. The Step column also displays the prepare(), verify(), save(), deploy(), and predict() methods for the model. The Status column displays which method is available next. After the initiate step, the prepare() method is available. The next step is to call the prepare() method.

Register Model

```python
>>> # Register the model
>>> model_id = pytorch_model.save()
Start loading model.pt from model directory /tmp/tmpf11gnx9c ...
loading model.pt is complete.
Model is successfully loaded.
['.score.py.swp', 'score.py', 'model.pt', 'runtime.yaml']
'ocid1.datasciencemodel.oc1.xxx.xxxxx'
```

Deploy and Generate Endpoint

```python
# Deploy and create an endpoint for the PyTorch model
pytorch_model.deploy(
    display_name="PyTorch Model For Classification",
    deployment_log_group_id="ocid1.loggroup.oc1.xxx.xxxxx",
    deployment_access_log_id="ocid1.log.oc1.xxx.xxxxx",
    deployment_predict_log_id="ocid1.log.oc1.xxx.xxxxx",
    # Shape config details are mandatory for flexible shapes:
    # deployment_instance_shape="VM.Standard.E4.Flex",
    # deployment_ocpus=<number>,
    # deployment_memory_in_gbs=<number>,
)

print(f"Endpoint: {pytorch_model.model_deployment.url}")
# Output: "Endpoint: https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx"
```

Run Prediction against Endpoint

```python
# Download an image
import urllib.request

import numpy as np

url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")
urllib.request.urlretrieve(url, filename)

# Preprocess the image and convert it to a torch.Tensor
from PIL import Image
from torchvision import transforms

input_image = Image.open(filename)
preprocess = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
])
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model

# Generate prediction by invoking the deployed endpoint
prediction = pytorch_model.predict(input_batch)['prediction']
print(np.argmax(prediction))
# Output: 258
```

Predict with Image

Added in version 2.6.7.

To predict a single image, pass it to predict() through the image argument. The image can be a PIL.Image.Image object or a URI. The URI can be an http(s) URL, a local path, or another URL scheme such as "oci://", "s3://", or "gcs://".

The image is converted to a tensor and then serialized so that it can be passed to the endpoint. You can intercept the tensor in score.py to apply further transformations.

Note

The payload size limit is 10 MB. See the documentation on invoking a model deployment for details.
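Serialized payloads are base64-encoded, which makes them roughly a third larger than the raw bytes. It can therefore help to check the request size before sending it. The stdlib-only sketch below makes two assumptions: that "10 MB" means 10 * 1024 * 1024 bytes of request body, and that the body uses the {"data": ..., "data_type": ...} JSON shape from the raw-request example later on this page. build_payload() and fits_limit() are hypothetical helpers, not ADS functions.

```python
import base64
import json

# Assumed limit: 10 MB interpreted as 10 * 1024 * 1024 bytes.
PAYLOAD_LIMIT_BYTES = 10 * 1024 * 1024


def build_payload(raw: bytes, data_type: str = "torch.Tensor") -> bytes:
    """Hypothetical helper: wrap base64-encoded bytes in a JSON request body."""
    encoded = base64.b64encode(raw).decode("utf-8")
    return json.dumps({"data": encoded, "data_type": data_type}).encode("utf-8")


def fits_limit(body: bytes, limit: int = PAYLOAD_LIMIT_BYTES) -> bool:
    """Return True if the request body is within the size limit."""
    return len(body) <= limit


small = build_payload(b"\x00" * 1_000_000)  # About 1.33 million bytes once encoded
large = build_payload(b"\x00" * 8_000_000)  # About 10.67 million bytes once encoded

print(len(small), fits_limit(small))
print(len(large), fits_limit(large))
```

Under these assumptions, an 8 MB raw tensor already exceeds the limit after encoding. This is one reason to send a resized image.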

Because of this size limit, the following example uses a resized image. To pass an image and invoke prediction, score.py needs additional code to preprocess the data. Open pytorch_model_artifact/score.py and update the pre_inference() method. The edits are the preprocessing lines that follow the call to deserialize():

```python
def pre_inference(data, input_schema_path):
    """
    Preprocess json-serialized data to feed into predict function.

    Parameters
    ----------
    data: Data format as expected by the predict API of the core estimator.
    input_schema_path: path of input schema.

    Returns
    -------
    data: Data format after any processing.
    """
    # deserialize() is provided by the generated score.py.
    data = deserialize(data, input_schema_path)

    # The image arrives as a tensor, so ToTensor() is not needed here.
    import torchvision.transforms as transforms
    preprocess = transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.Normalize(
            mean=[0.485, 0.456, 0.406],
            std=[0.229, 0.224, 0.225]
        ),
    ])
    input_tensor = preprocess(data)
    input_batch = input_tensor.unsqueeze(0)
    return input_batch
```

Save score.py and verify that prediction works:

```python
>>> uri = ("https://github.com/oracle-samples/oci-data-science-ai-samples/raw/master/model_deploy_examples/images/dog_resized.jpg")
>>> prediction = pytorch_model.verify(image=uri)["prediction"]
>>> import numpy as np
>>> np.argmax(prediction)
258
```

Redeploy the model with the updated score.py:

```python
pytorch_model.deploy(
    display_name="PyTorch Model For Classification",
    deployment_log_group_id="ocid1.loggroup.oc1.xxx.xxxxxx",
    deployment_access_log_id="ocid1.log.oc1.xxx.xxxxxx",
    deployment_predict_log_id="ocid1.log.oc1.xxx.xxxxxx",
)
```

Run prediction with the image provided:

```python
uri = ("https://github.com/oracle-samples/oci-data-science-ai-samples/raw/master/model_deploy_examples/images/dog_resized.jpg")

# Generate prediction by invoking the deployed endpoint
prediction = pytorch_model.predict(image=uri)['prediction']
```

Run Prediction with the oci raw-request Command

Model deployment endpoints can be invoked with the OCI CLI. This example invokes a model deployment from the CLI with a torch.Tensor payload:

torch.Tensor payload example

```python
>>> # Prepare data sample for prediction and save it to file 'data-payload'
>>> from io import BytesIO
>>> import base64
>>> buffer = BytesIO()
>>> torch.save(input_batch, buffer)
>>> data = base64.b64encode(buffer.getvalue()).decode("utf-8")
>>> with open('data-payload', 'w') as f:
...     f.write('{"data": "' + data + '", "data_type": "torch.Tensor"}')
```

The data-payload file will contain content like this:

```text
{"data": "UEsDBAAACAgAAAAAAAAAAAAAAAAAAAAAAAAQ ........................
.......................................................................
...AAAAEAAABQSwUGAAAAAAMAAwC3AAAA0jEJAAAA", "data_type": "torch.Tensor"}
```
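The sketch below builds the same JSON body with json.dumps, which handles quoting and escaping instead of manual string concatenation. It then reverses the encoding to confirm that the tensor round-trips. The decoding half is a plain-PyTorch sketch of what a receiving side might do and is not necessarily how ADS's deserialize() works. A random tensor stands in for input_batch.

```python
import base64
import json
from io import BytesIO

import torch

# Random stand-in with the same shape as the preprocessed image batch.
input_batch = torch.randn(1, 3, 224, 224)

# Client side: serialize the tensor, base64-encode it, and build the JSON body.
buffer = BytesIO()
torch.save(input_batch, buffer)
body = json.dumps(
    {"data": base64.b64encode(buffer.getvalue()).decode("utf-8"), "data_type": "torch.Tensor"}
)

# Receiving side (a sketch): parse the JSON, decode base64, and load the tensor.
payload = json.loads(body)
restored = torch.load(BytesIO(base64.b64decode(payload["data"])))

print(payload["data_type"], torch.equal(input_batch, restored))
```

To produce the data-payload file, you could write body to disk with open('data-payload', 'w').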

Use the data-payload file and the endpoint URI to invoke prediction with the raw-request command in a terminal:

```bash
export uri=https://modeldeployment.{region}.oci.customer-oci.com/ocid1.datasciencemodeldeployment.oc1.xxx.xxxxx/predict
oci raw-request \
    --http-method POST \
    --target-uri $uri \
    --request-body file://data-payload
```

Expected Output of the raw-request Command

```json
{
  "data": {
    "prediction": [
      [
        0.0159152802079916,
        -1.5496551990509033,
        .......
        2.5116958618164062
      ]
    ]
  },
  "headers": {
    "Connection": "keep-alive",
    "Content-Length": "19398",
    "Content-Type": "application/json",
    "Date": "Thu, 08 Dec 2022 18:28:41 GMT",
    "X-Content-Type-Options": "nosniff",
    "opc-request-id": "BD80D931A6EA4C718636ECE00730B255/86111E71C1B33C24988C59C27F15ECDE/E94BBB27AC3F48CB68F41135073FF46B",
    "server": "uvicorn"
  },
  "status": "200 OK"
}
```

Copy the prediction output into np.argmax() to retrieve the predicted class index:

```python
>>> print(np.argmax([[0.0159152802079916,
...                   -1.5496551990509033,
...                   .......
...                   2.5116958618164062]]))
258
```
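Instead of copying numbers by hand, you can parse the JSON that oci raw-request prints, for example after redirecting the command's output to a file. The stdlib-only sketch below works on a shortened, made-up response with the same structure as the output above. It checks the status and then picks the highest-scoring index.

```python
import json

# Shortened, made-up response with the same structure as the raw-request output above.
response_text = """
{
  "data": {"prediction": [[0.02, -1.55, 2.51, 0.30]]},
  "headers": {"Content-Type": "application/json"},
  "status": "200 OK"
}
"""

response = json.loads(response_text)
if not response["status"].startswith("200"):
    raise RuntimeError(f"Prediction request failed: {response['status']}")

scores = response["data"]["prediction"][0]
# Index of the highest score, equivalent to np.argmax for a single row.
predicted_index = max(range(len(scores)), key=scores.__getitem__)
print(predicted_index)
```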

Example

```python
from ads.common.model_metadata import UseCaseType
from ads.model.framework.pytorch_model import PyTorchModel

import numpy as np
from PIL import Image
import tempfile
import torchvision
from torchvision import transforms
import urllib.request

# Load a pretrained PyTorch Model
model = torchvision.models.resnet18(pretrained=True)
model.eval()

# Prepare Model Artifact for PyTorch Model
artifact_dir = tempfile.mkdtemp()
pytorch_model = PyTorchModel(model, artifact_dir=artifact_dir)
pytorch_model.prepare(
    inference_conda_env="pytorch110_p38_cpu_v1",
    training_conda_env="pytorch110_p38_cpu_v1",
    use_case_type=UseCaseType.IMAGE_CLASSIFICATION,
    force_overwrite=True,
    use_torch_script=True,
)

# Download an image for running inference
url, filename = ("https://github.com/pytorch/hub/raw/master/images/dog.jpg", "dog.jpg")
urllib.request.urlretrieve(url, filename)

# Load and preprocess the image
input_image = Image.open(filename)
preprocess = transforms.Compose(
    [
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
    ]
)
input_tensor = preprocess(input_image)
input_batch = input_tensor.unsqueeze(0)  # create a mini-batch as expected by the model

# Check if the artifacts are generated correctly.
# The verify method invokes the ``predict`` function defined inside ``score.py`` in the artifact_dir
prediction = pytorch_model.verify(input_batch)["prediction"]
print(np.argmax(prediction))

# Register the model
model_id = pytorch_model.save(display_name="PyTorch Model")

# Deploy and create an endpoint for the PyTorch model
pytorch_model.deploy(
    display_name="PyTorch Model For Classification",
    deployment_log_group_id="ocid1.loggroup.oc1.xxx.xxxxxx",
    deployment_access_log_id="ocid1.log.oc1.xxx.xxxxxx",
    deployment_predict_log_id="ocid1.log.oc1.xxx.xxxxxx",
)

# Generate prediction by invoking the deployed endpoint
prediction = pytorch_model.predict(input_batch)["prediction"]
print(np.argmax(prediction))

# To delete the deployed endpoint, uncomment the line below
# pytorch_model.delete_deployment(wait_for_completion=True)
```